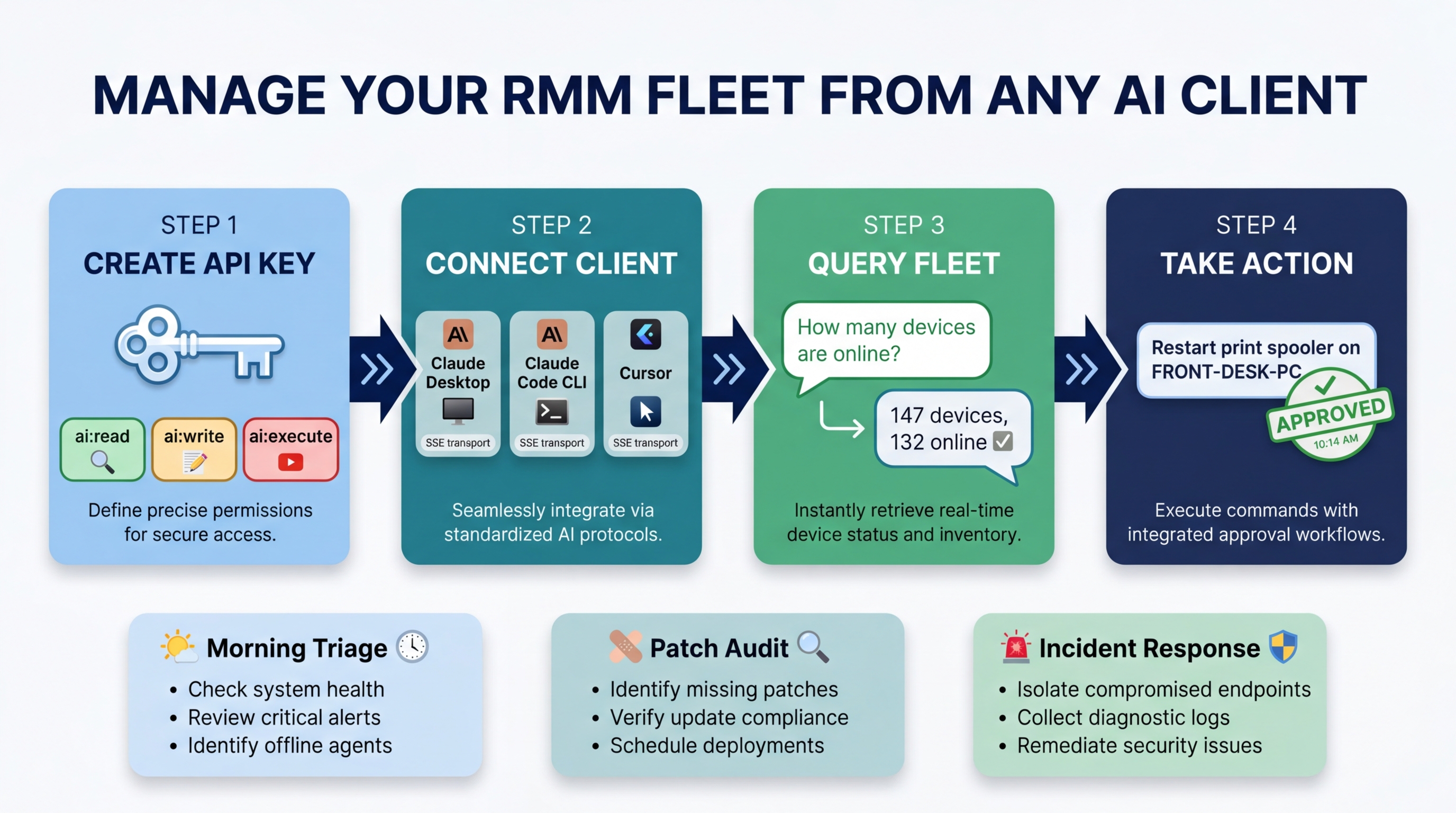

How to Use Your RMM From Claude Desktop (or Cursor)

You already spend half your day inside an AI assistant. Drafting emails, summarizing tickets, writing scripts. What if that same assistant could also query your device fleet, triage overnight alerts, and restart a stuck service on a client workstation — all without you switching to another browser tab?

With Breeze’s MCP server, that is exactly what happens. Claude Desktop, Claude Code, and Cursor become full RMM clients. You type a question in natural language, the AI calls Breeze tools over MCP, and you get real data back from your live fleet. No dashboard required.

This post walks through the setup from scratch. By the end, you will have Claude Desktop (or your preferred AI client) connected to your Breeze instance, running live queries against your endpoints.

What Is MCP (and Why It Matters Here)

MCP — Model Context Protocol — is the open standard that lets AI clients call external tools. Think of it as the bridge between “AI that talks” and “AI that does things.” When you connect Breeze to an MCP-capable client, the AI gains access to every Breeze tool: device queries, alert management, command execution, metric analysis, and more.

The key distinction: this is not a chatbot embedded in a web UI. This is your existing AI workflow tool gaining direct access to your RMM platform. You stay in the environment you already work in.

Prerequisites

Before you start, you need three things:

- A running Breeze RMM instance (self-hosted). Your API server must be reachable from the machine running your AI client. If Breeze runs on a private network, confirm that the client machine can route to it before you continue.

- An API key with appropriate scopes. We will create this in Step 1.

- One of the following AI clients: Claude Desktop, Claude Code CLI, or Cursor.

That is it. No plugins to install, no extensions to configure, no middleware to deploy.

Step 1: Create an API Key

Navigate to Settings > API Keys in your Breeze admin panel. Click Create New Key.

You will be asked to select scopes. This is the most important decision in the entire setup, so take a moment to understand what each scope unlocks:

| Scope | What It Unlocks | Risk Level |
|---|---|---|
| ai:read | Tier 1 tools only: query devices, view alerts, analyze metrics, pull reports | Low: read-only |
| ai:write | Tier 1 + Tier 2 tools: alert acknowledgment, low-risk write operations | Medium: can modify alert state |
| ai:execute | All tiers including Tier 3: command execution, script runs, service management | High: can modify devices |

Start with ai:read. Test the integration, verify it works, get comfortable with the tool call flow. You can always upgrade the key later.

When you create the key, Breeze generates a token that starts with brz_. Copy it immediately — it is only shown once. Store it somewhere secure. This key authenticates every MCP tool call, and anyone who has it can perform any action the scopes allow.
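One way to keep the token out of shell history and config files is to read it from an environment variable at the point of use. The sketch below assumes a variable named BREEZE_API_KEY, which is a hypothetical name chosen for illustration and not something Breeze defines. Only the brz_ prefix and the X-API-Key header come from this post.

```python
import os


def load_breeze_key(env=None, var_name="BREEZE_API_KEY"):
    """Return the API key from the environment, or raise if it looks wrong.

    BREEZE_API_KEY is a hypothetical variable name used only in this sketch.
    """
    env = os.environ if env is None else env
    key = env.get(var_name, "").strip()
    if not key:
        raise ValueError(f"{var_name} is not set")
    if not key.startswith("brz_"):
        # Breeze tokens start with brz_, so anything else is likely a paste error.
        raise ValueError(f"{var_name} does not look like a Breeze token")
    return key


def auth_headers(key):
    """Build the header that carries the key on every MCP request."""
    return {"X-API-Key": key}


def redact(key):
    """Show only the prefix and the last four characters, for safe logging."""
    return f"{key[:4]}...{key[-4:]}" if len(key) > 8 else "brz_..."
```

Logging redact(key) instead of the key itself keeps the full token out of terminal scrollback and log files.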

Step 2: Connect Your AI Client

The setup differs slightly depending on which client you use. Pick the one that matches your workflow.

Option A: Claude Desktop

Claude Desktop is the most common setup. It gives you a conversational interface with tool confirmation dialogs for high-risk operations.

Open a terminal and run:

    claude mcp add breeze-rmm \
      --transport sse \
      --url https://your-breeze-api.example.com/api/v1/mcp/sse \
      --header "X-API-Key: brz_your_key_here"

Here is what each flag does:

- breeze-rmm: the name for this MCP connection (you will see it in the Claude Desktop tool picker)

- --transport sse: uses Server-Sent Events for streaming communication between Claude and Breeze

- --url: your Breeze API server’s MCP endpoint. Replace your-breeze-api.example.com with your actual domain or IP.

- --header: passes your API key as an authentication header on every request

After running this command, restart Claude Desktop. You should see Breeze tools appear in the tool picker when you start a new conversation.

Option B: Claude Code CLI

If you work in the terminal, Claude Code CLI uses the exact same command:

    claude mcp add breeze-rmm \
      --transport sse \
      --url https://your-breeze-api.example.com/api/v1/mcp/sse \
      --header "X-API-Key: brz_your_key_here"

The advantage of Claude Code for RMM work: you can chain natural language fleet queries with shell commands, scripts, and file operations in the same session. Ask Claude Code to pull device data from Breeze, pipe it into a script, and output a report, all without leaving the terminal.

Option C: Cursor

Cursor supports MCP through its settings. Open Cursor Settings and navigate to the MCP panel (or edit your MCP configuration directly). Add a new server with this configuration:

    {
      "mcpServers": {
        "breeze-rmm": {
          "url": "https://your-breeze-api.example.com/api/v1/mcp/sse",
          "transport": "sse",
          "headers": {
            "X-API-Key": "brz_your_key_here"
          }
        }
      }
    }

Cursor is the ideal setup for MSP engineers who also write automation scripts. You are already in an IDE; now you can query live fleet data inline while building PowerShell scripts, Python automations, or Breeze API integrations. Ask “what OS versions are running across the Contoso tenant?” and get real numbers without opening a browser.
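If you set up several workstations, generating this configuration can be less error-prone than hand-editing it. Here is a minimal sketch that assumes the same structure as the JSON above. The function name is made up for illustration, and because this post does not say where Cursor stores the file, the script only prints the result.

```python
import json


def cursor_mcp_config(base_url, api_key, name="breeze-rmm"):
    """Return the mcpServers entry from this section as a Python dict."""
    return {
        "mcpServers": {
            name: {
                # Tolerate a trailing slash on the base URL.
                "url": base_url.rstrip("/") + "/api/v1/mcp/sse",
                "transport": "sse",
                "headers": {"X-API-Key": api_key},
            }
        }
    }


if __name__ == "__main__":
    config = cursor_mcp_config(
        "https://your-breeze-api.example.com", "brz_your_key_here"
    )
    print(json.dumps(config, indent=2))
```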

Step 3: Your First Query

With the connection established, open your AI client and try a simple read-only interaction.

Type:

“How many devices are currently online in my fleet?”

Here is what happens behind the scenes:

- The AI determines it needs fleet data and selects the list_devices tool.

- It sends the tool call to Breeze over the MCP connection.

- Breeze validates your API key, checks the ai:read scope (passes), enforces tenant isolation, and queries the device database.

- The results stream back to the AI client.

- The AI formats the data into a readable summary.

You get something like:

“Your fleet has 147 devices total. 132 are currently online, 12 are offline, and 3 are in a pending state. The offline devices are primarily at the Contoso - Branch Office site.”

No dashboards opened. No filters applied. No clicking.

Try a second query:

“Show me all critical alerts from the last 24 hours”

The AI calls manage_alerts with severity and time filters. Breeze returns the data, and the AI presents a table of alert titles, affected devices, timestamps, and severities. You have an instant triage view without leaving your current window.
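If you are curious what the client actually sends for these two queries, MCP tool calls are JSON-RPC 2.0 requests with the method tools/call. The sketch below builds such requests in Python. The tool names come from this post, but the argument names (status, action, severity, since) are assumptions, so check the tool schemas your Breeze instance advertises for the real ones.

```python
import json
from datetime import datetime, timedelta, timezone
from itertools import count

_ids = count(1)  # JSON-RPC request ids; any unique value per request works


def tool_call(name, arguments):
    """Build an MCP tools/call request (JSON-RPC 2.0)."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def critical_alerts_since(hours, now=None):
    """Arguments for a 'critical alerts from the last N hours' query.

    The keys used here are hypothetical, not Breeze's documented schema.
    """
    now = now or datetime.now(timezone.utc)
    since = now - timedelta(hours=hours)
    return {"action": "list", "severity": "critical", "since": since.isoformat()}


if __name__ == "__main__":
    print(json.dumps(tool_call("list_devices", {"status": "online"}), indent=2))
    print(json.dumps(tool_call("manage_alerts", critical_alerts_since(24)), indent=2))
```

You never write these requests by hand in normal use. The AI client generates them from your natural language question.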

Step 4: Taking Action

Read-only queries are useful, but the real power comes when you upgrade to write and execute scopes. Update your API key to ai:write or ai:execute (in Settings > API Keys), then try these:

Tier 2 — Low-Risk Writes (Auto-Executes)

“Acknowledge all low-severity alerts older than 48 hours”

The AI calls manage_alerts with action: 'acknowledge', filtered by severity and age. This is a Tier 2 operation — it auto-executes immediately with full audit logging. No confirmation dialog.

Tier 3 — High-Impact Actions (Requires Confirmation)

“Restart the Windows Update service on ACME-PC-07”

The AI calls manage_services with action: 'restart' targeting a specific device. This is Tier 3. In Claude Desktop, a tool confirmation dialog appears before execution. You see exactly what will happen — the tool name, the target device, the action — and you approve or deny. In Claude Code CLI, the tool call is displayed and you confirm in the terminal.

How the Tier System Works

Breeze classifies every MCP tool call into risk tiers:

- Tier 1 (Read) — auto-runs, no confirmation needed. All query and analysis tools.

- Tier 2 (Low-Risk Write) — auto-runs with full audit logging. Alert management, status updates.

- Tier 3 (High-Impact) — requires confirmation in the AI client before executing. Command execution, service management, script runs.

The API key scope is the first gate. If your key only has ai:read, the AI cannot even attempt a Tier 2 or Tier 3 call — Breeze rejects it before the risk engine evaluates it. Scopes are hard boundaries, not suggestions.
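The two-stage check described above can be modeled in a few lines. This is an illustrative sketch of the rules as this post states them, not Breeze's actual implementation. The function names and return values are invented for the example.

```python
# Highest tier each scope unlocks, per the scope table in Step 1.
SCOPE_MAX_TIER = {"ai:read": 1, "ai:write": 2, "ai:execute": 3}


def max_tier(scopes):
    """Highest tier that any of the key's scopes unlocks (0 if none)."""
    return max((SCOPE_MAX_TIER.get(s, 0) for s in scopes), default=0)


def gate(scopes, tool_tier):
    """Return what happens to a tool call of the given tier.

    'rejected': the scope check fails, so the risk engine never sees the call
    'auto':     Tier 1 and Tier 2 calls run immediately
    'confirm':  Tier 3 calls wait for approval in the AI client
    """
    if tool_tier not in (1, 2, 3):
        raise ValueError(f"unknown tier: {tool_tier}")
    if tool_tier > max_tier(scopes):
        return "rejected"
    return "confirm" if tool_tier == 3 else "auto"
```

For example, gate({"ai:read"}, 3) returns "rejected", while gate({"ai:execute"}, 3) returns "confirm".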

Real Workflow Examples

Three practical workflows that MSP technicians use daily with this setup.

Morning Triage From Claude Desktop

You open Claude Desktop at 8 AM with a coffee. Type:

“Summarize overnight alerts, group by severity, and show which devices need attention first”

The AI calls manage_alerts to pull all alerts since your last session, then calls list_devices to cross-reference device status. It returns a structured triage report:

Critical (2): ACME-DC-01 disk at 97% (alert at 3:12 AM), Contoso-SQL-02 service ‘MSSQLSERVER’ stopped (alert at 4:47 AM).

High (5): Three devices failed patch deployment overnight. Two devices showing sustained high CPU since 2 AM.

Low (11): Standard threshold alerts — disk cleanup recommended on 6 devices, 5 certificate expiration warnings (30+ days out).

Recommended priority: ACME-DC-01 disk is the most urgent — a domain controller at 97% disk will cause replication failures. Contoso-SQL-02 service stop is second — production database may be unreachable.

You have a prioritized action plan before your first meeting. The entire interaction took twenty seconds.

Patch Audit From Cursor

You are writing a PowerShell automation script in Cursor and need to know the current state of Windows updates across a tenant. Without switching windows:

“What’s the Windows update compliance rate across the Contoso tenant?”

The AI queries Breeze, and you get real-time data inline:

“Contoso tenant: 89 devices. 74 (83%) are fully patched. 12 devices are missing 1-2 patches (mostly KB5034441, released 3 days ago). 3 devices have failed patch installations requiring manual review.”

You use those exact numbers in the script you are writing. Live fleet data feeding directly into automation development — no context switching.

Incident Response Chain

A client calls about a server that seems unresponsive. You type:

“Check if ACME-DC-01 is responding, show recent alerts, and pull the last 50 lines of the event log”

The AI executes multiple tool calls in sequence:

- get_device_details: confirms the device is reporting as online but with elevated response times.

- manage_alerts: surfaces three critical alerts: disk space, failed DNS resolution, and Active Directory replication failure.

- execute_command: pulls the last 50 event log entries (Tier 3, so you confirm the tool call first).

The AI correlates the data:

“ACME-DC-01 is online but degraded. The root cause appears to be disk space — at 97% full, NTDS.dit cannot expand, which is causing AD replication failures, which in turn is causing DNS resolution failures. Recommend immediate disk cleanup followed by a forced AD replication cycle.”

Three tool calls, one coherent diagnosis. The alternative was opening the Breeze dashboard, navigating to the device, checking three different tabs, opening a remote session, running Get-EventLog manually, and correlating everything yourself. The MCP approach compressed that into a single natural language request.

Security and Governance

Connecting an external AI client to your RMM raises legitimate security questions. Here is how Breeze addresses them.

Every MCP tool call goes through the same risk engine as the web UI. There is no separate code path for MCP requests. The same tenant isolation, the same input validation, the same audit logging. MCP is an access method, not a privilege escalation.

API key scopes are the first gate. If the key does not have ai:execute, Tier 3 tools are blocked at the authentication layer — before the risk engine even evaluates the request. Scopes are enforced server-side. The AI client cannot override them.

Rate limits apply per-key. Default limits are 30 SSE connections per minute and 120 messages per minute per API key. These are configurable via environment variables (MCP_SSE_RATE_LIMIT_PER_MINUTE, MCP_MESSAGE_RATE_LIMIT_PER_MINUTE). A runaway AI client or a compromised key cannot flood your instance.
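To make the per-key limits concrete, here is a sliding-window limiter sketch that uses the defaults and environment variable names above. The windowing algorithm is an assumption, since this post does not say how Breeze counts requests, and the class and function names are hypothetical.

```python
import os
import time
from collections import defaultdict, deque


class PerKeyRateLimiter:
    """Sliding window: at most `limit` events per key per `window_seconds`."""

    def __init__(self, limit, window_seconds=60.0, clock=time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self._events = defaultdict(deque)  # key id -> timestamps of recent events

    def allow(self, key_id):
        now = self.clock()
        events = self._events[key_id]
        # Drop timestamps that have aged out of the window.
        while events and now - events[0] >= self.window:
            events.popleft()
        if len(events) >= self.limit:
            return False
        events.append(now)
        return True


def default_limiters(env=None):
    """Build (sse_limiter, message_limiter) from the documented variables."""
    env = os.environ if env is None else env
    sse = PerKeyRateLimiter(int(env.get("MCP_SSE_RATE_LIMIT_PER_MINUTE", 30)))
    msg = PerKeyRateLimiter(int(env.get("MCP_MESSAGE_RATE_LIMIT_PER_MINUTE", 120)))
    return sse, msg
```

Because each key has its own window, one noisy client cannot use up the allowance of another key.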

All actions are logged in the audit trail. Every tool call — including the input parameters, the caller’s API key ID, the risk tier, and the execution result — is recorded. You can review MCP activity the same way you review web UI activity: through the audit log or by asking the AI itself (“Show me all MCP tool executions from the past 24 hours”).
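As a mental model, each audit entry can be pictured as a small record holding the fields listed above. The sketch below is illustrative only. The field names and JSON shape are assumptions, not Breeze's actual audit schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now():
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AuditRecord:
    """One MCP tool call as it might appear in an audit trail (hypothetical)."""

    tool: str          # e.g. manage_services
    arguments: dict    # input parameters sent with the call
    api_key_id: str    # identifier of the key, never the key itself
    risk_tier: int     # 1, 2, or 3
    result: str        # e.g. success, denied, error
    timestamp: str = field(default_factory=_utc_now)

    def to_json(self):
        return json.dumps(asdict(self), sort_keys=True)


def by_tier(records, tier):
    """Filter records to a single risk tier, e.g. to review Tier 3 activity."""
    return [r for r in records if r.risk_tier == tier]
```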

For production hardening, two additional environment variables tighten the controls:

- MCP_EXECUTE_TOOL_ALLOWLIST: restricts which Tier 3 tools are available via MCP. Set it to a comma-separated list of tool names (e.g., execute_command,manage_services). When this is set, only the listed tools can execute. An empty value in production means all Tier 3 tools are denied by default.

- MCP_REQUIRE_EXECUTE_ADMIN: when set to true, Tier 3 tools require the ai:execute_admin scope in addition to ai:execute. This lets you create API keys that can read and manage alerts via MCP but cannot run commands, even if they have ai:execute.
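Here is how the two variables combine, as a minimal sketch. The variable names and the deny-by-default rule in production come from the descriptions above. The behavior outside production with an empty allowlist is an assumption, and the function names are hypothetical.

```python
def parse_allowlist(raw):
    """Split a comma-separated value into a set of tool names."""
    return {name.strip() for name in (raw or "").split(",") if name.strip()}


def tier3_allowed(tool, scopes, env, production=True):
    """Decide whether a Tier 3 tool may run under the hardening variables."""
    if "ai:execute" not in scopes:
        return False
    require_admin = env.get("MCP_REQUIRE_EXECUTE_ADMIN", "").lower() == "true"
    if require_admin and "ai:execute_admin" not in scopes:
        return False
    allowlist = parse_allowlist(env.get("MCP_EXECUTE_TOOL_ALLOWLIST"))
    if allowlist:
        return tool in allowlist
    # Empty or unset: denied in production (per the post).
    # Allowed elsewhere is an assumption made for this sketch.
    return not production
```

With MCP_EXECUTE_TOOL_ALLOWLIST set to execute_command,manage_services, a key holding ai:execute can call those two tools and nothing else in Tier 3.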

Tips and Gotchas

A few things that will save you time:

Start with read-only keys and test before upgrading. This is not just a safety recommendation — it is a debugging strategy. If ai:read queries work, you know the connection, authentication, and tenant isolation are all functioning. If a write operation fails later, you can narrow the issue to scope configuration.

MCP tool calls skip the web UI approval flow. In the Breeze web UI, Tier 3 actions trigger an in-app approval dialog. Over MCP, the AI client handles confirmation through its own UX (Claude Desktop shows a tool confirmation dialog, Claude Code shows the tool call in the terminal). The approval still happens — it just happens in the client, not in the Breeze UI.

If a tool call fails, check API key scopes first. The most common failure mode is calling a Tier 2 or Tier 3 tool with an ai:read key. Breeze returns a clear error, but the AI may not always surface the exact scope mismatch. Check your key scopes in Settings > API Keys before debugging further.

SSE connections have a session limit per key. Default is 5 concurrent SSE sessions per API key (configurable via MCP_MAX_SSE_SESSIONS_PER_KEY). If you are connecting from multiple machines or clients with the same key, you may hit this limit. Create separate keys for separate clients — it is better for audit trails anyway.
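The session cap behaves like a simple per-key counter. Here is a sketch assuming the default of 5. The class and method names are invented for illustration, and how Breeze tracks sessions internally is not described in this post.

```python
class SessionTracker:
    """Track concurrent SSE sessions per API key (illustrative only)."""

    def __init__(self, max_per_key=5):
        # Mirrors the MCP_MAX_SSE_SESSIONS_PER_KEY default.
        self.max_per_key = max_per_key
        self._open = {}  # key id -> set of open session ids

    def open(self, key_id, session_id):
        """Register a session; return False if the key is at its limit."""
        sessions = self._open.setdefault(key_id, set())
        if session_id in sessions:
            return True  # already counted
        if len(sessions) >= self.max_per_key:
            return False
        sessions.add(session_id)
        return True

    def close(self, key_id, session_id):
        """Release a session so another client can connect."""
        self._open.get(key_id, set()).discard(session_id)
```

Two clients sharing one key draw from the same pool of five, which is another reason to issue a separate key per client.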

Keep your Breeze instance updated. MCP tool definitions evolve as Breeze adds features. Updating Breeze automatically updates the tools available to your AI client. No reconfiguration needed on the client side.

What Comes Next

This tutorial covers the fundamentals: creating a key, connecting a client, running queries, and taking action. For deeper dives into specific aspects of Breeze AI and MCP:

- MCP Server feature page — full feature overview of the MCP integration, including all available tools and transport options

- Configuring Breeze AI: The Self-Hoster’s Guide — every configuration option, including budget management, RBAC mapping, and production lockdown

- What Breeze AI Can Actually Do For Your Help Desk — more workflow examples and a deeper look at the tool tier system

The setup takes five minutes. The first time your AI assistant pulls live fleet data and saves you a context switch, you will not go back to tab-hopping between your RMM dashboard and your AI client. They are the same thing now.