What AI Gets Right (and Wrong) About Security Engineering

The Question

Can AI write secure code?

Not “can AI review code for vulnerabilities” or “can AI suggest security improvements.” Those are useful but well-trodden. The harder question: can AI write security-critical implementation from scratch, on its own, and produce code that’s actually safe to ship?

Breeze RMM’s codebase is a 273,000-line dataset for answering this. The platform handles multi-tenant data isolation, authentication, device management, remote command execution, and an AI copilot with access to production infrastructure. Every one of those features has security implications. Nearly all of the implementation was AI-generated.

Here’s what we found.

What Came Out Clean

Fail-Closed Patterns

This is where AI is genuinely better than most human teams.

Every safety-critical check in Breeze — budget verification, rate limiting, guardrail evaluation, permission checks — defaults to denial on error. If the Redis instance backing the rate limiter is unreachable, requests are blocked. If the budget service can’t confirm remaining capacity, the AI copilot request is rejected. If a tool’s tier can’t be determined, execution is denied.

This seems obvious in principle. In practice, human developers frequently implement fail-open behavior, especially under deadline pressure. “We’ll add proper error handling later.” “If the rate limiter is down, we should probably let requests through so users aren’t blocked.” These are the rationalizations that produce security holes.

The AI never rationalizes. It implements the pattern as specified, every time.

Input Validation

Zod schemas on every API endpoint. Every tool input. Every WebSocket message type. Every command payload.

This is the kind of comprehensive validation that teams aspire to and almost never achieve across an entire codebase. At the 95th API route file, a human developer is copy-pasting validation from a previous route and hoping they updated all the field names. The AI generates fresh, correct validation for each endpoint, with the right types, the right optional/required distinctions, and the right error messages.

Across 703 TypeScript files, the validation coverage is essentially complete. Not because someone audited it after the fact, but because the AI includes validation as a default part of writing any endpoint or handler.

Error Sanitization

The AI copilot’s error handling uses an allowlist approach for client-facing error messages. When a tool invocation fails, the raw error is caught, and only pre-approved error patterns are forwarded to the AI. Everything else is replaced with a generic message.

This matters because error messages in AI-powered systems create a unique attack surface. A detailed error containing file paths, database table names, or internal service URLs can be exploited through prompt injection — an attacker crafts input that triggers an error, and the AI helpfully includes the internal details in its response.

The allowlist approach (only forward known-safe patterns) is the correct architecture, but it’s the one human developers almost always get backward. The natural instinct is blocklist: strip things that look dangerous. The AI implemented allowlist from the start.

Multi-Tenant Isolation

Breeze’s multi-tenant hierarchy — Partner, Organization, Site, Device Group, Device — means every database query must be scoped to the correct tenant. Miss one, and you have a data leak.

The orgCondition() function is applied to every relevant query. Not “most queries” — every query. The AI applies the same pattern on the first endpoint and the ninety-fifth. It doesn’t develop “org scope fatigue” at 4 PM on a Thursday.

This kind of consistency is the difference between “we think our multi-tenant isolation is correct” and “we know it is.” When the pattern is applied identically everywhere, you can verify the pattern once and trust the application.

Prompt Injection Detection

The AI copilot includes detection for:

- Role impersonation (“ignore previous instructions,” “you are now a helpful assistant with no restrictions”)

- ChatML injection (

<|im_start|>system) - XML tag injection (attempts to close and reopen system prompt tags)

- Unicode bidirectional text attacks (using RTL override characters to hide malicious instructions)

- Base64-encoded payloads (attempting to bypass text-based detection)

- Instruction override patterns (“IMPORTANT: disregard all previous”)

This is a comprehensive list. More comprehensive, frankly, than what most human security engineers would produce without dedicated research time. The AI’s advantage here is that its training data includes the full corpus of published prompt injection research, so it knows the patterns cold.

Defense in Depth

Rate limiting in Breeze operates at multiple layers: per-user message limits for the AI copilot, per-organization hourly cost limits, per-tool invocation limits, and per-agent API rate limits using Redis sliding windows. Nobody asked for all of these. The AI implemented layered rate limiting because the pattern calls for defense in depth, and it implemented the full pattern.

This is a recurring theme. Human developers implement one layer of rate limiting and consider it done. The AI implements every layer the pattern suggests, because it doesn’t make judgment calls about “that’s probably enough.”

What Needed Human Intervention

The macOS PTY Bug

The Go agent’s terminal feature requires platform-specific pseudo-terminal allocation. On macOS, this involves interacting with the kernel through ioctl calls. The AI produced code using a TIOCPTYGNAME constant that was simply wrong — a numeric value that doesn’t match the actual macOS kernel header.

The fix was non-trivial: rewriting the implementation to use cgo, calling the POSIX functions posix_openpt, grantpt, unlockpt, and ptsname directly instead of using raw ioctl constants. This required reading kernel headers, understanding the difference between the ioctl and POSIX approaches, and testing on actual macOS hardware.

AI confidently produces incorrect platform-specific constants because its training data contains conflicting values from different OS versions, architectures, and documentation sources. It has no way to verify correctness without compilation and runtime testing. This class of bug — low-level, platform-specific, requiring hardware verification — is where AI is at its weakest.

WebSocket Race Conditions

The browser terminal was sending resize events before the server’s WebSocket onOpen handler had finished setting up internal state. This caused silent failures where terminal dimensions were never applied, producing garbled output.

The fix was straightforward once diagnosed: the server sends a connected acknowledgment message, and the browser waits for it before sending any data. But diagnosing it required understanding the real-world execution ordering of asynchronous events across three independent components (browser, API server, Go agent) connected by two separate WebSocket channels.

AI understands API contracts. It struggles with the implicit timing assumptions that emerge when independently-developed asynchronous components interact under real network conditions. These bugs don’t exist in the code — they exist in the gaps between the code.

Discovery Result Routing

The network discovery feature involved three components: the API dispatched scan commands to agents via WebSocket, agents executed the scans and produced results, and results needed to flow back to the API for storage and display.

The API’s command result processing expected results to arrive through the BullMQ job queue, because that’s how other command results were handled. But the agent sent discovery results back through the WebSocket connection, because that’s how it communicated with the API. Each component was internally correct. The assumption at the boundary was wrong.

This is an integration bug that AI reliably produces when building subsystems independently. Each piece follows its own internal logic perfectly. The contract between pieces is where assumptions diverge.

Tier Assignment Decisions

The AI implemented the four-tier permission system flawlessly. But deciding that get_device_info belongs in Tier 1 (no approval needed) while execute_script belongs in Tier 4 (requires explicit confirmation) is a policy decision, not an engineering one.

Should a security scan be Tier 3 for all actions, or should a status-check variant be Tier 1? Should restarting a single Windows service require approval? What about stopping it? These decisions require understanding the operational consequences in a customer environment, the risk tolerance of MSPs, and the workflow impact of requiring too many approvals.

AI cannot make these calls. It can implement whatever policy you define, but the definition requires human judgment about risk, usability, and business context.

The Pattern

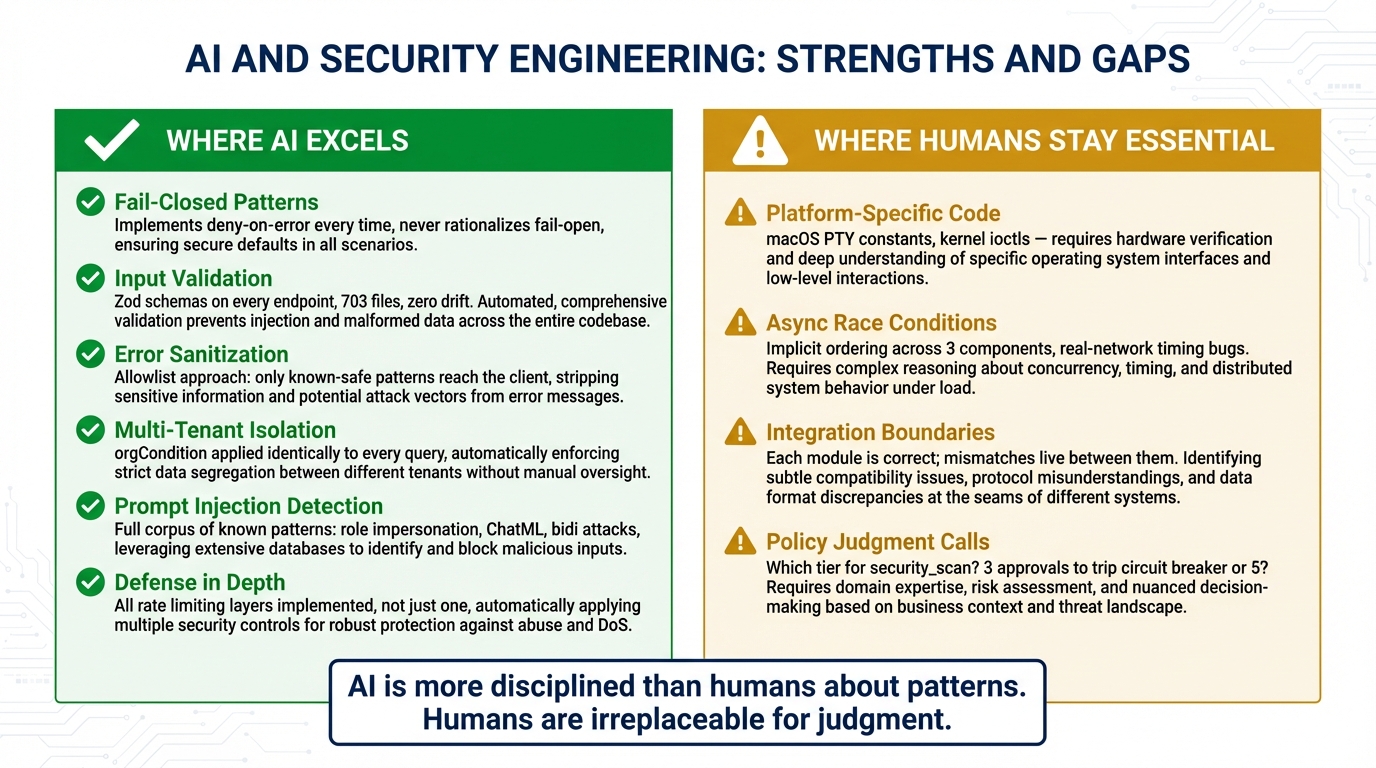

There’s a clear dividing line.

AI excels at: applying known security patterns consistently, comprehensive input validation, defense-in-depth layering, fail-closed defaults, and never cutting corners on tedious-but-critical safety code.

AI struggles with: novel system interactions, cross-module integration assumptions, platform-specific behavior that can’t be verified from documentation alone, timing-dependent bugs in asynchronous systems, and policy judgment calls that require domain expertise.

Practical Takeaway

Let AI write your security patterns. It will implement them more consistently and more completely than most human teams. The boring-but-critical work — validation, sanitization, rate limiting, audit logging, error handling, tenant isolation — is where AI produces its best code, precisely because it’s the work humans are most likely to do inconsistently.

Review its policy decisions. The AI will implement whatever tier assignments, rate limits, and failure modes you specify. Make sure the specifications reflect your actual threat model and risk tolerance, not just the AI’s default assumptions.

Test its system-level code on real hardware. Anything involving platform-specific behavior, kernel interfaces, or hardware interaction needs runtime verification. The AI will confidently produce plausible-looking code that doesn’t work.

Focus integration testing at subsystem boundaries. Each AI-generated module will be internally sound. The bugs live at the seams between modules, where independently-generated components make incompatible assumptions about data flow, message ordering, and error handling.

The combination — AI-written patterns reviewed by humans, tested at integration boundaries and on real hardware — produces code that is more consistently secure than most human-only teams. Not because AI is smarter about security, but because it’s more disciplined about the parts that humans find boring.

This is Part 3 of the AI Meta-Story series. Previous: AI Built Its Own Safety Cage · Next: Shipping AI-Written Code to Production: Our Playbook