Why Your Clients Keep Failing CIS Assessments (And Why It Is Not Their Fault)

The MSP owner walked into the assessment confident. Their client had endpoint protection on every device, a managed firewall, centralized logging through their RMM, and a documented configuration baseline they’d set up eighteen months ago. They had done the work. They had the stack.

They failed CIS Control 4.

The assessor wasn’t disputing that the tools existed. The problem was evidence: ninety days of continuous proof that every device in scope was running a hardened configuration. Not just the configuration they’d deployed during initial setup, but the configuration as it existed on each device, verified continuously across the audit window. They couldn’t produce it. Their only snapshots came from quarterly manual checks, and between those checks three devices had been reimaged, two more had received software installs that changed the baseline, and one had been quietly out of compliance for six weeks because of a misconfigured GPO.

They had the capability. They didn’t have the proof. And in a CIS assessment, those are two different things.

The Assessment Snapshot Problem

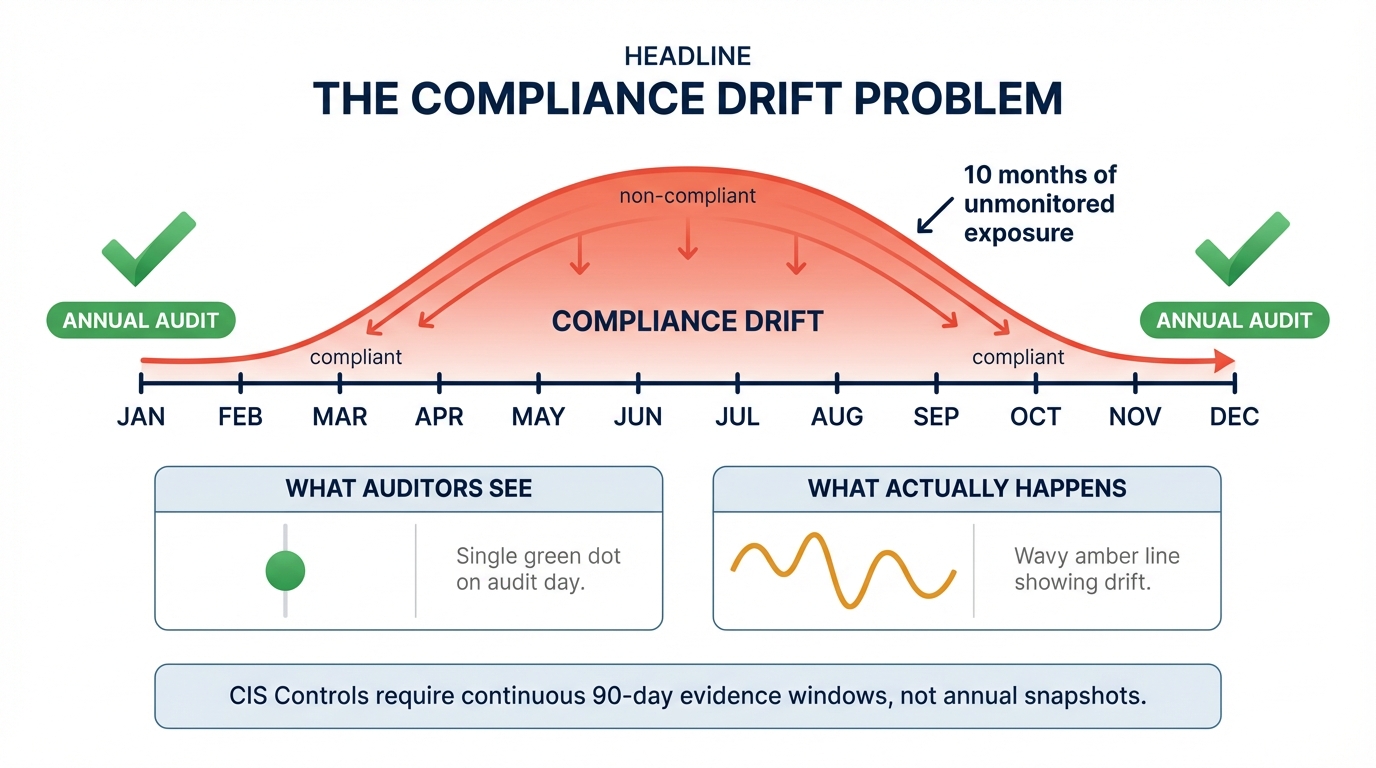

Most MSPs think of compliance as a state: either a device is compliant or it isn’t. Pass the assessment, move on. This model made sense when CIS assessments were annual paper exercises run by consultants with clipboards.

CIS Controls v8 was designed around a different premise: configuration drift is continuous and inevitable, and “compliant” is not a state you achieve once but a condition you maintain and can demonstrate over time. The framework’s language reflects this explicitly. Control 1 tells organizations to “actively manage” enterprise assets and to “establish and maintain” an “accurate, detailed, and up-to-date” inventory of them. Control 4 requires that organizations “establish and maintain” a secure configuration process: present tense, ongoing. Control 8 calls on organizations to collect, review, and “retain” audit logs, and Safeguard 8.10 sets the retention floor at 90 days.

This means an assessor isn’t asking whether you could theoretically check these things. They’re asking whether you actually did, continuously, and whether you kept the receipts.

A device that passes a compliance check on a Monday morning can fail by Thursday afternoon. A Windows update can re-enable a disabled service. A user can install software that modifies registry settings. An application deployment can overwrite a hardened configuration file. A new device is onboarded, but its GPO assignment isn’t applied correctly. These aren’t hypothetical edge cases; they’re the ordinary texture of managing a Windows fleet at any scale.

The gap between your hardening baseline and your current device state grows every day without active enforcement. Point-in-time snapshots don’t close that gap. They give you a photograph of what things looked like when you were paying attention.
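Detecting that gap is, at its core, a comparison between the baseline and what the device reports right now. Here is a minimal sketch, assuming the baseline is a simple mapping of setting names to expected values; the setting names and the `detect_drift` helper are hypothetical illustrations, not any particular product’s schema.

```python
from dataclasses import dataclass

# Hypothetical hardening baseline: setting name -> expected value.
# Real baselines contain far more settings than this.
BASELINE = {
    "smbv1_enabled": False,
    "firewall_enabled": True,
    "remote_registry_service": "disabled",
    "min_password_length": 14,
}

@dataclass(frozen=True)
class Drift:
    setting: str
    expected: object
    actual: object

def detect_drift(baseline: dict, observed: dict) -> list:
    """Return every baseline setting whose observed value differs from the baseline.

    A setting missing from the observed state counts as drift, because
    "unknown" can't be shown to an assessor as "compliant".
    """
    drifts = []
    for setting, expected in baseline.items():
        actual = observed.get(setting)  # None if the device didn't report it
        if actual != expected:
            drifts.append(Drift(setting, expected, actual))
    return drifts

# Monday: the device matches the baseline.
# Thursday: an update has re-enabled a service that was disabled.
monday = dict(BASELINE)
thursday = {**BASELINE, "remote_registry_service": "automatic"}
print(detect_drift(BASELINE, monday))    # []
print(detect_drift(BASELINE, thursday))  # [Drift(setting='remote_registry_service', expected='disabled', actual='automatic')]
```

The check itself is trivial. The hard part is running it on every device, continuously, instead of whenever someone remembers to look.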

Why Dashboards Don’t Scale

The conventional response to this problem is the compliance dashboard. Every major RMM has one. Patch status, endpoint protection, disk encryption — color-coded, exportable, reassuring. The problem isn’t that these dashboards are wrong. The problem is what they expect a human being to do with the data.

A compliance dashboard surfaces signals. Acting on those signals — investigating the device, determining the cause of the drift, remediating the issue, and documenting the remediation — requires a technician. Every single signal, every single time.

A typical MSP technician manages somewhere between 150 and 300 devices. If each device generates even one compliance signal per week that requires human review, that’s 150 to 300 manual investigations per technician per week, before they’ve handled a single help desk ticket, onboarded a new client, or dealt with an actual incident. The math doesn’t work. It has never worked.
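To put rough numbers on it, here is a back-of-envelope calculation. The 15 minutes per signal (investigate, remediate, document) is an assumption for illustration only, not a measured figure; plug in your own.

```python
def weekly_review_hours(devices: int, signals_per_device: float, minutes_per_signal: float) -> float:
    """Hours per week one technician spends just working compliance signals."""
    return devices * signals_per_device * minutes_per_signal / 60

# Assumption: 15 minutes to investigate, remediate, and document one signal.
MINUTES_PER_SIGNAL = 15
for devices in (150, 300):
    hours = weekly_review_hours(devices, signals_per_device=1, minutes_per_signal=MINUTES_PER_SIGNAL)
    print(f"{devices} devices -> {hours:.1f} hours/week")
# 150 devices -> 37.5 hours/week
# 300 devices -> 75.0 hours/week
```

Under that assumption, the low end of the range consumes nearly a full 40-hour week and the high end needs almost two technicians, with nothing left over for tickets or incidents.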

What actually happens (what has to happen, given the constraints) is triage by noise level. The signals that are loud enough, or frequent enough, or attached to a client who complains, get attention. The quiet drift, the gradual misconfiguration, the device that has been slightly out of compliance for three months without triggering anything: these get missed. Not because the technician is negligent. Because there are only so many hours in a day and 200 devices to manage.

This is a tooling design problem, not a people problem. It’s the predictable outcome of building systems that surface data to humans and expect humans to act on all of it.

What “Coverage” Actually Means in CIS v8

There’s a phrase that shows up constantly in MSP compliance conversations: “We have a tool for that.” Endpoint protection for Control 10, a SIEM for Control 8, MFA enforcement for Control 6. The implication is that having the capability is equivalent to meeting the control.

It isn’t.

CIS Control 1, Inventory and Control of Enterprise Assets, requires not just that you have a discovery tool, but that every device in scope is identified and catalogued and that the inventory stays current. If a new device joins the network and doesn’t appear in your inventory within a defined window, you’re not meeting the control, regardless of whether your RMM could theoretically scan for it. The control is about what’s actually happening, continuously, not what’s theoretically possible.
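The “defined window” test can be expressed as a simple check: which devices has discovery seen for longer than the window, yet still don’t appear in the inventory? A sketch, assuming discovery records a first-seen timestamp per device; the function name, the MAC-address keys, and the 24-hour window in the example are all hypothetical.

```python
from datetime import datetime, timedelta

def uninventoried_devices(discovered: dict, inventory: set,
                          now: datetime, window: timedelta) -> list:
    """Return device IDs seen on the network longer than `window` but absent from inventory.

    `discovered` maps a device identifier (here, a MAC address) to the time
    discovery first saw it on the network.
    """
    return sorted(
        device for device, first_seen in discovered.items()
        if device not in inventory and now - first_seen > window
    )

now = datetime(2024, 6, 1, 12, 0)
discovered = {
    "aa:bb:cc:00:00:01": now - timedelta(days=30),  # inventoried
    "aa:bb:cc:00:00:02": now - timedelta(hours=2),  # new, still inside the window
    "aa:bb:cc:00:00:03": now - timedelta(days=3),   # new, window exceeded
}
inventory = {"aa:bb:cc:00:00:01"}
print(uninventoried_devices(discovered, inventory, now, window=timedelta(hours=24)))
# ['aa:bb:cc:00:00:03']
```

An empty result, recorded on every run across the audit window, is the kind of evidence that shows the inventory was actually kept current rather than merely being capable of it.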

CIS Control 4 is where this distinction hurts the most. The control covers secure configuration of enterprise assets. Meeting it requires that you can demonstrate, with evidence, that your configuration baseline is applied to every device in scope and that deviations are detected and remediated. Not once, not quarterly, but as an ongoing process. An assessor can reasonably ask: show me a device that drifted from baseline in the last 90 days, show me when it was detected, and show me when it was remediated. If you can’t answer that question with timestamps and evidence, you’re not meeting the control — even if you have a configuration management tool, even if you have a policy, even if you did the initial hardening.
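Answering the assessor’s question is straightforward if drift events are recorded as they happen. The sketch below assumes a hypothetical `DriftEvent` record with detection and remediation timestamps, and filters it down to the 90-day audit window; it is an illustration of the query, not any tool’s actual data model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

@dataclass
class DriftEvent:
    device_id: str
    setting: str
    detected_at: datetime
    remediated_at: Optional[datetime] = None  # None means the drift is still open

def audit_window_report(events, now, days=90):
    """Split drift events detected inside the audit window into remediated and open.

    Returns (remediated, still_open): remediated is a list of
    (event, time_to_remediate) pairs. Events detected before the
    window started are excluded.
    """
    start = now - timedelta(days=days)
    in_window = [e for e in events if start <= e.detected_at <= now]
    remediated = [(e, e.remediated_at - e.detected_at)
                  for e in in_window if e.remediated_at is not None]
    still_open = [e for e in in_window if e.remediated_at is None]
    return remediated, still_open

now = datetime(2024, 6, 30, 9, 0)
events = [
    DriftEvent("laptop-17", "bitlocker_enabled", now - timedelta(days=40),
               now - timedelta(days=40) + timedelta(minutes=12)),
    DriftEvent("desk-03", "smbv1_enabled", now - timedelta(days=5)),      # still open
    DriftEvent("desk-09", "firewall_enabled", now - timedelta(days=120),  # outside window
               now - timedelta(days=119)),
]
remediated, still_open = audit_window_report(events, now)
for event, elapsed in remediated:
    print(f"{event.device_id} {event.setting}: detected {event.detected_at}, fixed after {elapsed}")
print("open:", [e.device_id for e in still_open])
```

If drift events were never recorded in the first place, no query can reconstruct them after the fact, which is exactly the position quarterly snapshots leave you in.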

Control 8, audit log management, is similarly exacting. “We have logging enabled” is not the same as “we have centralized, searchable, retained logs that meet the defined retention period with documented coverage across every device in scope.” The first is a configuration. The second is a demonstrable, continuous process.
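“Documented coverage across every device in scope” can be checked mechanically, too. This sketch assumes the central log store can report the oldest and newest entry per device; the function, its parameters, and the 90-day and 24-hour thresholds are hypothetical defaults to be replaced with your own policy.

```python
from datetime import datetime, timedelta

def log_coverage_gaps(scope, log_spans, now,
                      retention=timedelta(days=90),
                      max_silence=timedelta(hours=24)):
    """Return {device_id: reason} for in-scope devices whose central logs don't show coverage.

    log_spans maps device_id -> (oldest_entry, newest_entry) timestamps from
    the central log store. Devices missing from log_spans have no logs at all.
    """
    gaps = {}
    for device in sorted(scope):
        span = log_spans.get(device)
        if span is None:
            gaps[device] = "no logs in central store"
            continue
        oldest, newest = span
        if now - oldest < retention:
            gaps[device] = "history shorter than retention period"
        elif now - newest > max_silence:
            gaps[device] = "no recent entries (logging may have stopped)"
    return gaps

now = datetime(2024, 6, 30)
scope = {"pc1", "pc2", "pc3", "pc4"}
log_spans = {
    "pc1": (now - timedelta(days=200), now - timedelta(minutes=5)),  # covered
    "pc3": (now - timedelta(days=30), now - timedelta(minutes=5)),   # history too short
    "pc4": (now - timedelta(days=200), now - timedelta(days=10)),    # went silent
}
print(log_coverage_gaps(scope, log_spans, now))
```

This is deliberately simplified: it only looks at the oldest and newest entries, so it won’t catch a gap in the middle of the window, and it will flag recently onboarded devices until they accumulate enough history. Both are policy decisions worth making explicitly.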

The difference between having a tool and meeting a control is evidence of continuous enforcement. Most MSPs have the tools. Very few have the evidence.

The Assessment Failure Pattern

Assessors don’t fail clients for not having the right products. The vendors in this space have done an effective job of making sure most MSPs have at least the right category of tools for each control area. The failures come from the gap between capability and evidence.

The pattern looks like this: the MSP invested in the right tools, deployed them, and then relied on their technicians to stay on top of the signal output. At 150 to 300 devices per technician, systematic coverage is impossible. Drift accumulates in the quiet corners — the devices that aren’t generating alerts, the controls that look fine on the dashboard but haven’t been deeply verified since the initial deployment. When the assessment window arrives and the assessor asks for 90 days of continuous evidence, those quiet corners become visible.

The failure isn’t a failure of investment or intention. It’s a failure of enforcement at scale, which is ultimately a failure of tooling design.

The Design Goal That’s Missing

RMMs were built to help technicians manage devices more efficiently. That’s a valid and important design goal. It produced a generation of tools that are very good at making device management faster for humans — surfacing data, batching operations, centralizing visibility.

But continuous compliance enforcement isn’t a device management problem. It’s a policy enforcement problem. The question isn’t “can a technician find out whether this device is compliant?” — it’s “is this device compliant right now, and will it be brought back into compliance automatically if it drifts, without requiring a technician to act on a signal?”

Those are different design goals. A tool designed for the first goal will surface alerts. A tool designed for the second goal will act on them. The distinction determines whether your compliance posture depends on your technicians’ capacity or on your platform’s capabilities.

At scale — 50 clients, 2,000 devices, three technicians — only one of those designs produces continuous, demonstrable compliance. The other produces very detailed visibility into how many ways you’re failing.

What Changes When Enforcement Is Automatic

The shift from “we surface data” to “we enforce policy” changes the compliance conversation entirely. When a device drifts from its hardened configuration baseline, the platform detects it, triggers remediation, and logs the detection, the remediation action, and the timestamp — automatically. The technician doesn’t need to see the signal. The control is met because the system enforced it, not because someone was paying attention.
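The detect, remediate, log loop can be sketched in a few lines. Everything here is an assumption for illustration: `read_state` and `apply_setting` are hypothetical hooks standing in for whatever actually queries and configures a device, and an in-memory dict stands in for the fleet. The point is the shape of the loop: nothing waits on a human, and every action leaves a timestamped record.

```python
from datetime import datetime, timezone

def enforce(device_id, baseline, read_state, apply_setting, evidence,
            clock=lambda: datetime.now(timezone.utc)):
    """One enforcement pass over one device: detect, remediate, verify, record."""
    observed = read_state(device_id)
    for setting, expected in baseline.items():
        actual = observed.get(setting)
        if actual == expected:
            continue  # compliant; nothing to record for this setting
        detected_at = clock()
        apply_setting(device_id, setting, expected)  # hypothetical remediation hook
        # Re-read instead of assuming the fix worked; a failed fix stays open.
        fixed = read_state(device_id).get(setting) == expected
        evidence.append({
            "device": device_id,
            "setting": setting,
            "found": actual,
            "expected": expected,
            "detected_at": detected_at.isoformat(),
            "remediated_at": clock().isoformat() if fixed else None,
        })

# In-memory stand-in for a fleet, for illustration only.
fleet = {"pc1": {"firewall_enabled": False, "smbv1_enabled": False}}
baseline = {"firewall_enabled": True, "smbv1_enabled": False}

def read_state(device_id):
    return dict(fleet[device_id])  # copy, like a fresh query to the device

def apply_setting(device_id, setting, value):
    fleet[device_id][setting] = value

evidence = []  # append-only here; in practice this would be durable, tamper-evident storage
enforce("pc1", baseline, read_state, apply_setting, evidence)
print(evidence)
```

Run on a schedule or on change events for every device, a loop like this produces the audit trail as a side effect of doing the work, rather than as a separate reporting exercise.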

That’s what continuous evidence looks like. Not a dashboard screenshot. Not a quarterly report. A timestamped record of every detection and every enforcement action, across every device, over the full audit window.

This is the gap Breeze was designed to close. The compliance posture isn’t something a technician maintains by staying on top of their queue. It’s something the platform maintains by enforcing policy continuously and producing the evidence trail that demonstrates it was enforced. When an assessor asks for 90 days of evidence for CIS Control 4, the answer isn’t a screenshot and a conversation — it’s a report.

MSPs already have most of the tools they need to meet CIS Controls. The missing piece is the enforcement layer that makes those tools produce evidence of continuous operation, not just proof of capability. If you’re building toward a client assessment and you’re not sure whether you can produce that evidence, the time to find out is before the assessor asks the question.