32 Data Pipelines, 18 Security Controls: How Feature Design Maps to Compliance

When we laid out Breeze’s feature set, we did not start by asking what features MSPs want. We started by asking what data an AI brain needs to make good decisions. Then we mapped that data to CIS Controls and discovered something that should not have been surprising but was: a brain-centric feature design and a CIS-complete RMM are almost the same thing. The data that makes the brain smarter is the same data that proves compliance.

That convergence is not a coincidence. It is a consequence of a specific claim about what makes AI reasoning in infrastructure management useful versus theatrical. An AI that reasons over stale, incomplete, or unstructured data produces confident-sounding answers that do not reflect reality. An AI that reasons over a continuous, structured record of device state — software versions, network identities, privilege events, configuration drift — can make decisions a technician would actually trust. And it turns out that a continuous, structured record of device state is exactly what the CIS Controls are designed to ensure you maintain.

The implication is worth being explicit about: CIS Controls coverage is determined at feature design time. Not at audit time. Not at configuration time. Not by purchasing a compliance module after the fact. If you did not build the IP history pipeline, you cannot cover CIS 1 forensically. If you did not build the browser extension inventory, you cannot cover CIS 9. The architectural decisions made when designing each data pipeline are the compliance decisions — they just are not usually framed that way.

This post walks through how that works in practice, using one feature as a worked example before mapping the full picture.

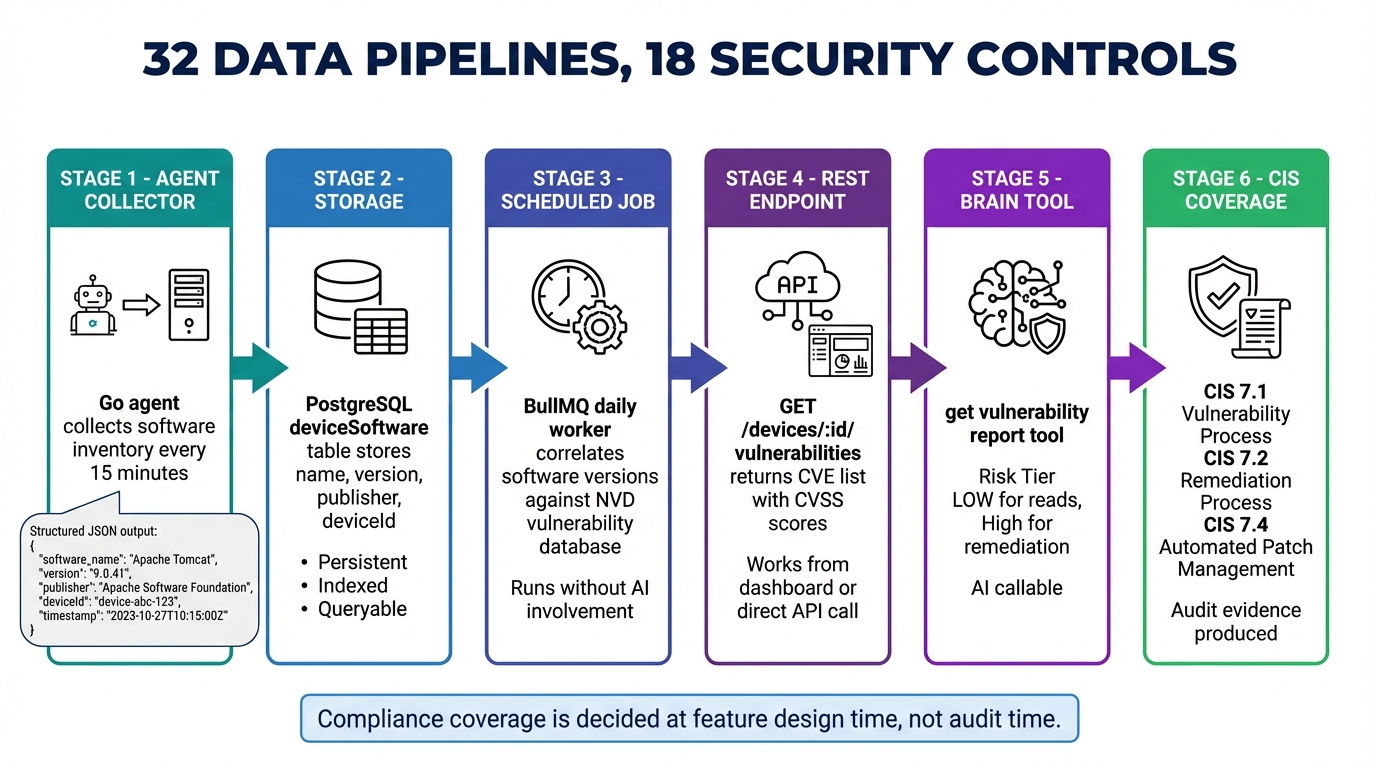

The Full Chain: Vulnerability Management (BE-16)

To make the argument concrete, it helps to follow a single feature from agent collector to CIS coverage, without skipping steps.

Step 1 — Agent collector (no new collector required)

The agent already collects software inventory every 15 minutes as part of its baseline telemetry cycle:

```json
{"name": "Apache Tomcat", "version": "9.0.41", "publisher": "Apache Software Foundation"}
```

This data exists because the brain needs to know what software is running on a device to answer questions about it. That same data is the input to vulnerability correlation.

Step 2 — Storage

The existing deviceSoftware table stores deviceId, name, version, publisher, and installedAt. Nothing new is needed here. The pipeline is already running.
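The sketch below shows why no new collector is needed. It maps one agent inventory cycle onto deviceSoftware rows. It makes two assumptions:

- The payload is a list of entries shaped like the JSON above.
- `installedAt` records when the agent first saw a given (name, version) pair.

The interface names and the `toDeviceSoftwareRows` helper are hypothetical and are not Breeze’s actual code.

```typescript
// Hypothetical shape of one entry in the agent's software inventory payload,
// mirroring the JSON example above.
interface AgentSoftwareEntry {
  name: string;
  version: string;
  publisher: string;
}

// Hypothetical row shape for the existing deviceSoftware table
// (deviceId, name, version, publisher, installedAt).
interface DeviceSoftwareRow {
  deviceId: string;
  name: string;
  version: string;
  publisher: string;
  installedAt: Date;
}

// Map one 15-minute inventory cycle to rows. Assumption: installedAt means
// "first seen by the agent", so known (name, version) pairs keep their
// original timestamp and new ones are stamped with the cycle time.
function toDeviceSoftwareRows(
  deviceId: string,
  entries: AgentSoftwareEntry[],
  existing: DeviceSoftwareRow[],
  seenAt: Date,
): DeviceSoftwareRow[] {
  const key = (name: string, version: string) => `${name}\u0000${version}`;
  const firstSeen = new Map<string, Date>();
  for (const row of existing) {
    if (row.deviceId === deviceId) firstSeen.set(key(row.name, row.version), row.installedAt);
  }
  return entries.map((e) => ({
    deviceId,
    name: e.name,
    version: e.version,
    publisher: e.publisher,
    installedAt: firstSeen.get(key(e.name, e.version)) ?? seenAt,
  }));
}
```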

Step 3 — New database tables for vulnerabilities

Two new tables are required. The first is a local mirror of the NVD dataset:

```
vulnerabilityDatabase: cveId, cvssScore, severity, affectedSoftware (jsonb),
                       publishedDate, exploitAvailable, patchAvailable
```

The second tracks per-device exposure:

```
deviceVulnerabilities: deviceId, cveId, softwareName, softwareVersion,
                       status (open|patched|mitigated|accepted), detectedAt
```

Step 4 — Scheduled correlation job

A BullMQ worker runs daily. It fetches NVD feed updates via the public API, then correlates (name, version) tuples from deviceSoftware against vulnerabilityDatabase. Matches are written to deviceVulnerabilities with CVSS scores and exploit availability flags.

This job runs whether or not the brain is online. The results are stored. They do not disappear when the conversation ends.
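Here is a minimal sketch of the correlation step. It assumes the rows have already been loaded from PostgreSQL into memory.

It deliberately simplifies affectedSoftware to exact (name, version) pairs. Real NVD records describe affected products with CPE match criteria and version ranges, so a production matcher would need more than string equality.

The types and the `correlate` function are hypothetical. The BullMQ scheduling around the function is omitted.

```typescript
// Hypothetical row shapes for the two tables described in Step 3.
type VulnStatus = "open" | "patched" | "mitigated" | "accepted";

interface VulnerabilityRecord {
  cveId: string;
  cvssScore: number;
  severity: string;
  // Assumption: affectedSoftware jsonb holds exact (name, version) pairs.
  // Real NVD data uses CPE matches with version ranges instead.
  affectedSoftware: { name: string; versions: string[] }[];
  exploitAvailable: boolean;
  patchAvailable: boolean;
}

interface DeviceVulnerability {
  deviceId: string;
  cveId: string;
  softwareName: string;
  softwareVersion: string;
  status: VulnStatus;
  detectedAt: Date;
}

interface InstalledSoftware {
  deviceId: string;
  name: string;
  version: string;
}

// Correlate installed (name, version) tuples against the local NVD mirror and
// return new "open" findings. Findings already recorded are skipped, so a
// re-run is idempotent and never resets a patched/accepted status.
function correlate(
  software: InstalledSoftware[],
  vulnDb: VulnerabilityRecord[],
  existing: DeviceVulnerability[],
  now: Date,
): DeviceVulnerability[] {
  const findingKey = (d: string, c: string, n: string, v: string) => [d, c, n, v].join("\u0000");
  const seen = new Set(existing.map((f) => findingKey(f.deviceId, f.cveId, f.softwareName, f.softwareVersion)));

  // Index lowercase name + exact version -> CVE ids for constant-time lookups.
  const index = new Map<string, string[]>();
  for (const vuln of vulnDb) {
    for (const affected of vuln.affectedSoftware) {
      for (const version of affected.versions) {
        const k = `${affected.name.toLowerCase()}\u0000${version}`;
        index.set(k, [...(index.get(k) ?? []), vuln.cveId]);
      }
    }
  }

  const findings: DeviceVulnerability[] = [];
  for (const sw of software) {
    for (const cveId of index.get(`${sw.name.toLowerCase()}\u0000${sw.version}`) ?? []) {
      const k = findingKey(sw.deviceId, cveId, sw.name, sw.version);
      if (seen.has(k)) continue;
      seen.add(k);
      findings.push({
        deviceId: sw.deviceId,
        cveId,
        softwareName: sw.name,
        softwareVersion: sw.version,
        status: "open",
        detectedAt: now,
      });
    }
  }
  return findings;
}
```

Because the output is written to a table rather than returned to a conversation, the findings survive regardless of whether the brain is running.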

Step 5 — API endpoint

```
GET /devices/:id/vulnerabilities
```

The endpoint returns a CVE list sorted by CVSS score, with patch availability and exploit status. It works without the brain. Dashboards use it. Alert rules fire on it. A technician can pull it directly via curl if they want to. The data is queryable, persistent, and human-readable without any AI involvement.
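The endpoint’s core logic can be sketched as a pure function. It joins a device’s findings with the local NVD mirror and sorts them by CVSS score.

The function name and response shape are hypothetical. A real route handler would load the rows with SQL and serialize the result.

```typescript
// Minimal, hypothetical shapes for the stored data the endpoint reads.
interface VulnRow {
  cveId: string;
  cvssScore: number;
  exploitAvailable: boolean;
  patchAvailable: boolean;
}

interface DeviceFinding {
  deviceId: string;
  cveId: string;
  softwareName: string;
  softwareVersion: string;
  status: "open" | "patched" | "mitigated" | "accepted";
}

// Hypothetical response item for GET /devices/:id/vulnerabilities.
interface DeviceVulnerabilityView {
  cveId: string;
  cvssScore: number;
  softwareName: string;
  softwareVersion: string;
  status: DeviceFinding["status"];
  exploitAvailable: boolean;
  patchAvailable: boolean;
}

// Join one device's findings with the NVD mirror and sort by CVSS, highest first.
function buildDeviceVulnerabilityReport(
  deviceId: string,
  findings: DeviceFinding[],
  vulnDb: VulnRow[],
): DeviceVulnerabilityView[] {
  const byCve = new Map(vulnDb.map((v) => [v.cveId, v] as const));
  const report: DeviceVulnerabilityView[] = [];
  for (const f of findings) {
    if (f.deviceId !== deviceId) continue;
    const v = byCve.get(f.cveId);
    if (!v) continue; // finding references a CVE no longer in the mirror
    report.push({
      cveId: f.cveId,
      cvssScore: v.cvssScore,
      softwareName: f.softwareName,
      softwareVersion: f.softwareVersion,
      status: f.status,
      exploitAvailable: v.exploitAvailable,
      patchAvailable: v.patchAvailable,
    });
  }
  // Tie-break on cveId so dashboards and alert rules see a stable order.
  return report.sort((a, b) => b.cvssScore - a.cvssScore || a.cveId.localeCompare(b.cveId));
}
```

Keeping this logic free of any AI dependency is what lets dashboards, alert rules and curl share one source of truth.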

Step 6 — Brain tools

Two tools wrap the API:

get_vulnerability_report calls GET /api/v1/vulnerabilities?org=... and returns a fleet-wide CVE summary sorted by severity. Risk tier: Low. It is read-only, and the brain calls it often — when assessing a device, when a critical CVE event fires, when a technician asks about exposure.

remediate_vulnerability calls POST /api/v1/patches/deploy. Risk tier: High outside maintenance windows, Medium within them. The brain requests approval before executing outside approved windows.
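The tier rule for remediate_vulnerability depends on the time of the call, so it has to be resolved per call. The sketch below does that under a few assumptions:

- Maintenance windows are stored as half-open [start, end) intervals.
- Every High-tier call needs approval, which matches the rule stated above for remediate_vulnerability.

The descriptor object, `riskTier` and `requiresApproval` are hypothetical names.

```typescript
type RiskTier = "Low" | "Medium" | "High";

interface MaintenanceWindow {
  start: Date; // inclusive
  end: Date;   // exclusive
}

// Hypothetical descriptors for the two tools described above.
const TOOLS = {
  get_vulnerability_report: { method: "GET", path: "/api/v1/vulnerabilities" },
  remediate_vulnerability: { method: "POST", path: "/api/v1/patches/deploy" },
} as const;

type ToolName = keyof typeof TOOLS;

function inWindow(at: Date, windows: MaintenanceWindow[]): boolean {
  const t = at.getTime();
  return windows.some((w) => t >= w.start.getTime() && t < w.end.getTime());
}

// Resolve a tool's risk tier at call time: read-only reporting is always Low;
// remediation is Medium inside an approved window and High outside it.
function riskTier(tool: ToolName, at: Date, windows: MaintenanceWindow[]): RiskTier {
  switch (tool) {
    case "get_vulnerability_report":
      return "Low";
    case "remediate_vulnerability":
      return inWindow(at, windows) ? "Medium" : "High";
  }
}

// Assumption: any call that resolves to High must wait for human approval.
function requiresApproval(tool: ToolName, at: Date, windows: MaintenanceWindow[]): boolean {
  return riskTier(tool, at, windows) === "High";
}
```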

Step 7 — CIS Control coverage

The pipeline above produces coverage across three CIS 7 sub-controls:

- CIS 7.1: Establish a vulnerability management process. The daily correlation job and the deviceVulnerabilities table constitute this process.
- CIS 7.2: Establish a remediation process. The remediate_vulnerability tool, with its approval workflow, is the remediation process.
- CIS 7.4: Perform automated application patch management. The brain triggering patch deployment against known CVEs is the automation.

The Design Question That Determines Compliance

At Step 1, the team faced a choice that most teams do not recognize as a compliance decision: does the brain need to know what software versions are running, correlated with known vulnerabilities?

Yes. Therefore: software inventory is collected continuously at the agent level, NVD correlation runs on a schedule at the API level, results are stored in PostgreSQL, exposed via REST, and wrapped as brain tools.

That sequence of yes answers at feature design time is what produces CIS 7 coverage.

Now consider the alternative. Suppose the team had instead let the brain run vulnerability scans on demand via script execution. CIS 7 coverage would then exist only while the brain is active. It would come with no historical exposure data, a significant token cost per query, no dashboard for humans, no alerting, and no audit trail that persists across sessions. An auditor asking for evidence of a vulnerability management process would get a transcript of a chat conversation, if anyone saved it.

The same logic applies across every control in the framework. The architectural decisions are the compliance decisions. The compliance decisions are made at feature design time.

The Event Stream as the Compliance Detection Layer

Persistent data covers the historical record. But compliance is also about real-time detection: knowing when a new device appears on the network, when a critical CVE is introduced, when software violates policy.

Every BE feature in Breeze emits typed events into a stream the brain subscribes to. These are not notification events that trigger a UI badge. They are the compliance detection layer:

```
// Events emitted by BE-16 (Vulnerability Management)
"vulnerability.critical_detected" → {deviceId, cveId, cvssScore, exploitAvailable}

// Events emitted by BE-18 (New Device Alerting)
"network.new_device"   → {ipAddress, macAddress, hostname, openPorts}
"network.rogue_device" → {ipAddress, macAddress, manufacturer, openPorts}

// Events emitted by BE-15 (Application Whitelisting)
"compliance.software_violation" → {deviceId, softwareName, policyId, violationType}
```

When vulnerability.critical_detected fires, the brain wakes up and calls get_vulnerability_report. It evaluates whether to remediate immediately or schedule for maintenance, and then either acts or flags the issue for technician review. The detection-to-decision cycle happens in seconds. Without the event stream, the brain would need to poll continuously, which is expensive, slow, and architecturally backwards. The RMM should push state changes to the brain, not the other way around.

The event stream is also what makes the brain’s coverage of CIS controls active rather than passive. A brain that only answers questions when asked is an expensive search interface. A brain that subscribes to typed events and acts on them when thresholds are crossed is a continuous compliance monitor.

The Tool Catalog as the Compliance Action Layer

Detection without remediation does not satisfy most CIS controls. The controls specify that you establish processes — not just visibility, but the capability to respond. The brain’s tool catalog is where that capability lives.

Each remediation tool maps to the CIS control it addresses:

| Tool | CIS Control | Risk Tier |
|---|---|---|
| apply_cis_remediation | CIS 4 | High |
| remediate_vulnerability | CIS 7 | High / Medium |
| apply_audit_baseline | CIS 8 | High |
| execute_containment | CIS 17 | High |
| manage_software_policy | CIS 2 | Medium / High |
| scan_sensitive_data | CIS 3 | Medium |

Risk tiers govern when the brain acts autonomously versus when it requests human approval. High-tier tools require explicit approval or must be executed within a pre-approved maintenance window. This is not an arbitrary constraint — it is how you build a system that is auditable. Every tool call is logged. Every approval is recorded. The audit trail for CIS compliance is a byproduct of normal operations.
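The approval rule and the audit trail can be a single code path: every call passes through one gate that both decides and logs. The sketch below shows that shape.

The `AuditEntry` and `ToolCall` shapes and the `gateToolCall` function are hypothetical. The in-memory array stands in for an append-only audit table.

```typescript
type RiskTier = "Low" | "Medium" | "High";

// Hypothetical audit record, written for every call whether it is allowed or blocked.
interface AuditEntry {
  at: string;
  tool: string;
  tier: RiskTier;
  decision: "allowed" | "blocked_pending_approval";
  approvedBy?: string;
}

interface ToolCall {
  tool: string;
  tier: RiskTier;
  inApprovedWindow: boolean;
  approvedBy?: string; // set when a technician explicitly approved this call
}

// High-tier calls proceed only with explicit approval or inside a
// pre-approved maintenance window. Every decision is appended to the log,
// so the audit trail is produced by normal operation rather than by reporting.
function gateToolCall(call: ToolCall, log: AuditEntry[], now: Date): boolean {
  const allowed = call.tier !== "High" || call.inApprovedWindow || call.approvedBy !== undefined;
  log.push({
    at: now.toISOString(),
    tool: call.tool,
    tier: call.tier,
    decision: allowed ? "allowed" : "blocked_pending_approval",
    ...(call.approvedBy !== undefined ? { approvedBy: call.approvedBy } : {}),
  });
  return allowed;
}
```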

The Compliance Gaps That Are Genuinely Hard

Claiming comprehensive CIS coverage requires being honest about where the coverage is real and where it is thin.

CIS 14 (Security Awareness Training): Breeze tracks user risk scores — phishing click rates from email security integrations, security training completion from platform APIs, behavioral indicators from session and privilege data. The monitoring side is covered. But delivering the training itself requires integration with KnowBe4, Proofpoint Security Awareness, or a comparable platform. An RMM can tell you that a user’s risk score is 82 and they have not completed their quarterly training module. It cannot be the training platform. The monitoring is native; the delivery is a vendor integration problem.

CIS 15 (Service Provider Management): Multi-tenant architecture and integration audit trails provide some coverage — you can see what API keys exist, what integrations are active, when they were last used. But comprehensive third-party risk management — vendor questionnaires, contract review, SOC 2 report collection, supply chain risk assessment — is a GRC platform problem. An RMM is not the right place to store vendor contracts.

CIS 16 (Application Security): Application whitelisting, CVE scanning, and browser extension control cover the endpoint side of application security thoroughly. SAST, DAST, code review, and dependency scanning are developer tooling. The line between “RMM scope” and “developer security tooling” runs through CIS 16, and calling it anything other than what it is would be dishonest.

The honest accounting: 14 of 18 CIS controls at Strong coverage, 3 at Medium (14, 15, 16), and 1 that is out of scope by definition (18: Penetration Testing). The Medium controls all share the same characteristic. Each requires a specialized platform integration, where an RMM can provide the monitoring and coordination layer but not the specialized delivery capability. The right architecture makes those integrations possible and auditable, which is the appropriate boundary.

The Full Feature-to-Control Mapping

| BE Feature | Brain Tool(s) | CIS Control Coverage |
|---|---|---|
| BE-1: File System Intelligence | analyze_disk_usage, disk_cleanup | Brain context (no direct CIS) |
| BE-3: Reliability Scoring | get_fleet_health | Brain context (no direct CIS) |
| BE-6: Change Tracking | query_change_log | Brain context — forensic foundation |
| BE-9: Security Posture Scoring | get_security_posture | CIS 4 (partial), CIS 10 |
| BE-11: Device Context Memory | get_device_context, set_device_context | Brain context (no direct CIS) |
| BE-14: Agent Log Shipping | search_agent_logs, set_agent_log_level | CIS 8.2, 8.5 (agent operational logs) |
| BE-15: App Whitelisting | get_software_compliance, manage_software_policy, remediate_software_violation | CIS 2.5, 2.6, 2.7 |
| BE-16: Vulnerability Management | get_vulnerability_report, get_device_vulnerabilities, remediate_vulnerability | CIS 7.1, 7.2, 7.4 |
| BE-17: Privileged Access (PAM) | request_elevation, get_elevation_history, revoke_elevation | CIS 5.4, 6.1, 6.2 |
| BE-18: New Device Alerting | get_network_changes, acknowledge_network_device | CIS 1.1, 1.2, 13.3 |
| BE-19: IP History Tracking | get_ip_history | CIS 1.1, 1.2 (forensic) |
| BE-20: Central Log Search | search_logs, get_log_trends | CIS 8.2, 8.5, 8.9, 8.11 |
| BE-21: Audit Baselines | get_audit_compliance, apply_audit_baseline | CIS 8.1, 8.2, 8.3, 8.4, 8.5 |
| BE-22: Huntress Integration | get_huntress_status, get_huntress_incidents | CIS 10.1, 10.2, 13.1, 13.2 |
| BE-23: SentinelOne Integration | get_s1_threats, s1_isolate_device, s1_threat_action | CIS 10.1, 10.2, 13.1 |
| BE-24: Sensitive Data Discovery | scan_sensitive_data, get_sensitive_data_report | CIS 3.1, 3.2, 3.7, 3.11 |
| BE-25: USB/Peripheral Control | get_peripheral_activity, manage_peripheral_policy | CIS 3.9, 3.10 |
| BE-26: CIS Hardening Baselines | get_cis_compliance, get_cis_device_report, apply_cis_remediation | CIS 4.1 through 4.12 |
| BE-27: Browser Extension Control | get_browser_security, manage_browser_policy | CIS 9.1, 9.4 |
| BE-28: DNS Security Integration | get_dns_security, manage_dns_policy | CIS 9.2 |
| BE-29: Backup Verification | get_backup_health, run_backup_verification, get_recovery_readiness | CIS 11.1, 11.2, 11.3, 11.4, 11.5 |
| BE-30: Network Device Config | get_network_device_configs, get_network_firmware_status | CIS 12.1, 12.2, 12.6 |
| BE-31: User Risk Scoring | get_user_risk_scores, get_user_risk_detail, assign_security_training | CIS 14.1, 14.2, 14.6 |
| BE-32: Incident Response | create_incident, execute_containment, collect_evidence, get_incident_timeline, generate_incident_report | CIS 17.1, 17.2, 17.3, 17.4, 17.6, 17.7, 17.8, 17.9 |

The features without a direct CIS mapping (file system intelligence, reliability scoring, change tracking, device context memory) are not compliance gaps. They are the brain’s context layer: the data that allows the brain to answer “why” questions and to correlate events across time. Without them, the brain can tell you that a device is running a vulnerable software version. With them, it can tell you more:

- the vulnerability was introduced by a specific software update;
- the device’s reliability score dropped in the same window;
- three other devices at the same site show the same pattern.

The CIS controls provide the compliance floor. The context layer is what makes the brain useful.

What This Means Architecturally

The mapping table above is not a marketing artifact. It is a constraint on how features must be built if you want the compliance coverage to be real rather than claimed.

Every row in that table represents a feature that follows the same pipeline. An agent collector or scheduled job writes structured data to PostgreSQL. An API endpoint exposes that data for querying. A brain tool wraps the endpoint, and the event stream notifies the brain when state changes. Features that skip steps in that pipeline produce thin compliance coverage. Suppose scan_sensitive_data triggers a scan but stores results only in the brain’s context window. Then there is no get_sensitive_data_report for a human to review and no audit trail for CIS 3. Nor is there a persistent record once the conversation ends or the brain’s context rotates.
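The pipeline discipline can be checked mechanically if each feature declares which stages it implements. The manifest shape and the `thinCoverage` helper below are hypothetical. The four stages are the ones named in the paragraph above.

```typescript
// Hypothetical manifest declaring which pipeline stages a feature implements.
interface FeatureManifest {
  id: string;
  cisControls: string[];
  persistsToDatabase: boolean; // collector or scheduled job writes to PostgreSQL
  apiEndpoint?: string;        // queryable without the brain
  brainTools: string[];        // tools that wrap the endpoint
  emitsEvents: string[];       // typed events pushed to the brain
}

// List the pipeline stages a feature skips.
function missingStages(f: FeatureManifest): string[] {
  const missing: string[] = [];
  if (!f.persistsToDatabase) missing.push("persistent storage");
  if (!f.apiEndpoint) missing.push("API endpoint");
  if (f.brainTools.length === 0) missing.push("brain tool");
  if (f.emitsEvents.length === 0) missing.push("event stream");
  return missing;
}

// Report features that claim CIS coverage but skip at least one stage.
// Context-layer features with no CIS claim are not flagged.
function thinCoverage(features: FeatureManifest[]): { id: string; missing: string[] }[] {
  return features
    .filter((f) => f.cisControls.length > 0)
    .map((f) => ({ id: f.id, missing: missingStages(f) }))
    .filter((r) => r.missing.length > 0);
}
```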

The discipline required is the same discipline described in the first post in this series: build the RMM layer correctly before building the brain layer. Every data pipeline that exists at the RMM level is a data pipeline the brain can reason over without paying to collect it on demand. Every API endpoint that exists at the RMM level is a tool the brain can call with deterministic, queryable results. The compliance coverage is a side effect of building the platform correctly — which is the only way the coverage is meaningful.

Breeze is built this way. LanternOps, the brain layer that runs on top of it, does not carry compliance state in its context window. It queries it from the RMM, reasons over it, and acts on it — with every action logged, every approval recorded, and every result written back to a database that persists beyond the conversation. If you are evaluating how to build or extend an RMM with AI capabilities and CIS compliance coverage is a requirement, the question to ask is not “does this platform support CIS Controls?” The question is: for each CIS control, what data pipeline produces the coverage, where is that data stored, and what happens to the audit trail when the AI is not running? The answers to those questions tell you whether the coverage is architectural or aspirational.